Hadoop is an open-source framework designed for distributed storage and processing of large volumes of data across clusters of computers. It emerged as a solution to the growing challenges of storing and analyzing big data effectively. At its core, Hadoop was built around two primary components: the Hadoop Distributed File System (HDFS) for storage and MapReduce for processing. Since Hadoop 2, YARN has handled cluster resource management alongside them.

HDFS is a distributed file system that allows data to be stored across multiple machines in a Hadoop cluster, enabling high scalability and fault tolerance. MapReduce is a programming model for processing and generating large datasets in parallel across the cluster.
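To make the MapReduce model more concrete, here is a minimal single-machine sketch of the classic word-count example in plain Python. It does not use Hadoop's actual API; the function names (`map_phase`, `shuffle`, `reduce_phase`, `word_count`) are hypothetical and only illustrate the three stages. On a real cluster, the map and reduce steps would run in parallel on different nodes, and the framework would perform the shuffle.

```python
from collections import defaultdict


def map_phase(line):
    """Map: emit a (word, 1) pair for every word in one input line."""
    return [(word.lower(), 1) for word in line.split()]


def shuffle(pairs):
    """Shuffle: group emitted values by key (done by the framework in Hadoop)."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key, values):
    """Reduce: combine all values for one key into a single result."""
    return key, sum(values)


def word_count(lines):
    """Run map, shuffle, and reduce in sequence on a list of text lines."""
    pairs = [pair for line in lines for pair in map_phase(line)]
    grouped = shuffle(pairs)
    return dict(reduce_phase(key, values) for key, values in grouped.items())


if __name__ == "__main__":
    sample = ["big data big clusters", "data across clusters"]
    print(word_count(sample))
```

Because each input line is mapped independently and each key is reduced independently, both stages can be spread across many machines, which is what lets the model scale.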

One of Hadoop’s key strengths lies in its ability to process vast amounts of unstructured and semi-structured data, which traditional databases might struggle to handle efficiently. It is also highly fault-tolerant: HDFS replicates each block of data across multiple nodes (three copies by default), reducing the risk of data loss in case of hardware failures.
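The effect of replication on fault tolerance can be illustrated with a small simulation. This is a simplified sketch, not HDFS's real placement policy: the round-robin placement, the node names, and the helper functions `place_blocks` and `lost_blocks` are assumptions made for illustration (real HDFS also takes rack topology and free space into account). The replication factor of 3 matches the HDFS default.

```python
def place_blocks(num_blocks, nodes, replication=3):
    """Assign each block to `replication` distinct nodes, round-robin.

    Simplified on purpose: real HDFS placement is rack-aware.
    """
    if replication > len(nodes):
        raise ValueError("replication factor cannot exceed the number of nodes")
    placement = {}
    for block in range(num_blocks):
        placement[block] = [
            nodes[(block + offset) % len(nodes)] for offset in range(replication)
        ]
    return placement


def lost_blocks(placement, failed_nodes):
    """Return the blocks whose replicas were all stored on failed nodes."""
    failed = set(failed_nodes)
    return [block for block, replicas in placement.items() if set(replicas) <= failed]


if __name__ == "__main__":
    nodes = ["node1", "node2", "node3", "node4", "node5"]
    placement = place_blocks(num_blocks=10, nodes=nodes)
    # With three replicas per block, losing one or two nodes loses no data.
    print(lost_blocks(placement, ["node1"]))
    print(lost_blocks(placement, ["node1", "node2"]))
    # Only when every node holding a block's replicas fails is the block lost.
    print(lost_blocks(placement, ["node1", "node2", "node3"]))
```

In this toy setup, a block becomes unavailable only when all three nodes holding its copies fail at once, which is why replication makes data loss from individual hardware failures unlikely.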

Hadoop’s ecosystem has expanded significantly beyond its core components. It now includes various complementary tools and frameworks like Hive, Pig, HBase, Spark, and others. For instance, Hive provides a SQL-like interface to query and analyze data stored in Hadoop, while Spark offers faster in-memory data processing capabilities compared to MapReduce.

The flexibility and scalability of Hadoop have made it a cornerstone of big data processing in industries ranging from finance and healthcare to retail and technology. Its open-source nature has encouraged a vibrant community of developers and contributors, continuously enhancing and evolving the platform to meet the changing demands of the big data landscape.

Hadoop revolutionized big data processing, but newer technologies such as cloud-based solutions, NoSQL databases, and faster data processing engines have since emerged. As a result, many organizations now combine several tools and platforms to handle modern data challenges efficiently.

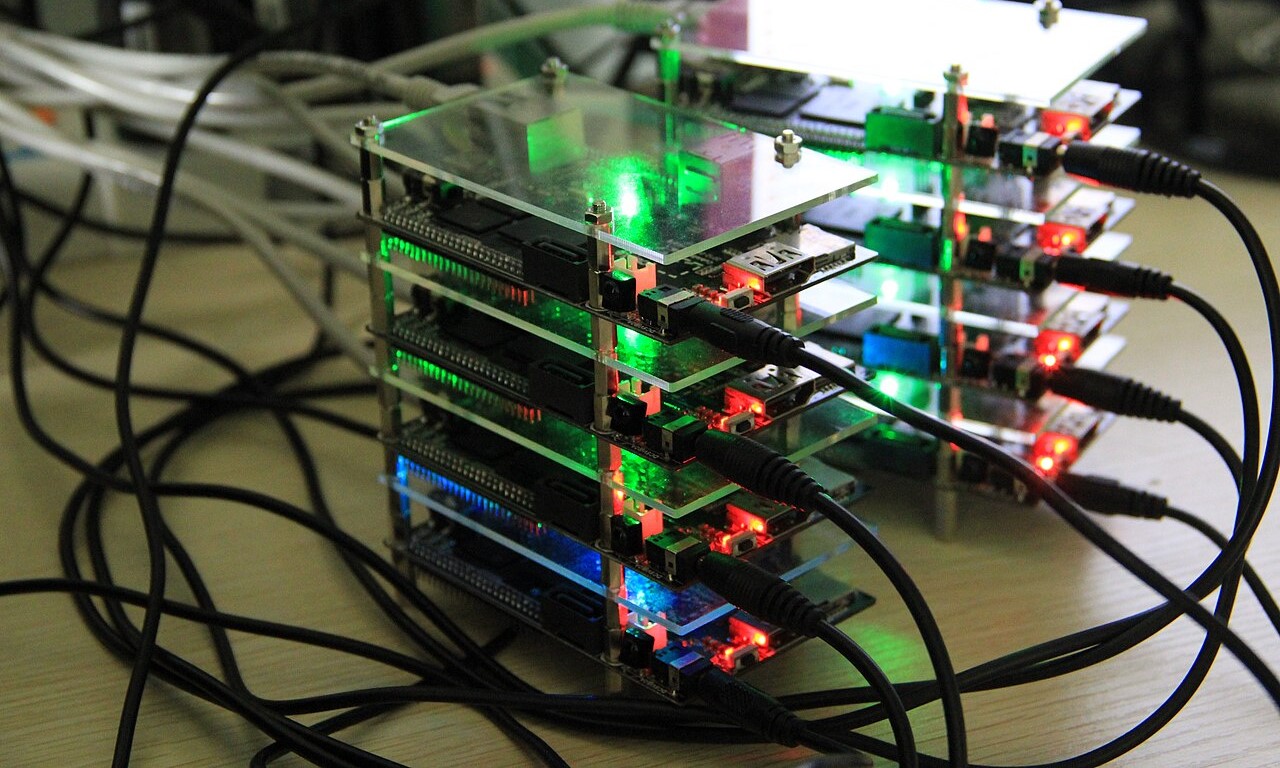

A Hadoop computer cluster

To learn more about it, here are 28 interesting facts about Hadoop.

- Inspired by Google: Hadoop was inspired by Google’s MapReduce and Google File System (GFS) papers, which outlined their approach to processing large-scale data.

- Named After a Toy Elephant: The name “Hadoop” comes from the name of a toy elephant belonging to the son of one of its creators, Doug Cutting.

- Created by Doug Cutting and Mike Cafarella: Doug Cutting and Mike Cafarella began developing Hadoop in 2005 as part of the Nutch search engine project, and it became a top-level Apache project in 2008.

- Apache Software Foundation Project: Hadoop is an open-source project under the Apache Software Foundation, encouraging collaboration and innovation among developers.

- Core Components: The core components of Hadoop include Hadoop Distributed File System (HDFS) for storage and MapReduce for processing data in parallel.

- Scalability: Hadoop is highly scalable, allowing organizations to expand their storage and processing capabilities by adding more nodes to the cluster.

- Fault Tolerance: It ensures fault tolerance by replicating data across multiple nodes, reducing the risk of data loss in case of hardware failures.

- Java-Based Framework: Hadoop is primarily written in Java, making it accessible and adaptable for a wide range of developers.

- YARN (Yet Another Resource Negotiator): YARN replaced the JobTracker and TaskTracker components in Hadoop 2, offering a more flexible resource management system.

- Hadoop Ecosystem: The Hadoop ecosystem includes various tools and frameworks like Hive, Pig, HBase, Spark, Kafka, and more, catering to different data processing needs.

- Big Data Processing: Hadoop is well-suited for processing vast amounts of unstructured and semi-structured data, providing a solution for big data challenges.

- Open Source Community: Hadoop’s open-source nature encourages a collaborative community of developers, fostering innovation and continuous improvement.

- Adoption in Enterprises: Many large enterprises across various industries, including tech, finance, healthcare, and retail, have adopted Hadoop for big data analytics.

- Cloud Integration: Hadoop has been integrated into various cloud platforms, making it more accessible and easier to manage for organizations.

- Machine Learning Integration: Hadoop has seen integration with machine learning frameworks, allowing organizations to perform complex data analysis and modeling.

- Adaptable to Multiple Data Types: Hadoop efficiently handles diverse data types, including text, images, videos, social media data, and more.

- Job Diversity: Hadoop clusters can run multiple types of jobs simultaneously, enabling parallel processing and efficient resource utilization.

- Challenges in Adoption: Despite its advantages, Hadoop adoption faces challenges related to complexity, skill requirements, and evolving data processing technologies.

- Evolution with Apache Spark: Apache Spark, known for its in-memory data processing and speed, has become an alternative to MapReduce in the Hadoop ecosystem.

- Structured and Semi-Structured Data Processing: Hadoop’s ecosystem tools like Hive and Pig enable the processing of structured and semi-structured data, making it more versatile.

- Streaming Data Processing: Tools such as Kafka, which is commonly used alongside Hadoop, support real-time data streaming and processing, addressing the need for immediate insights.

- Security Enhancements: Hadoop has seen continuous improvements in security features, addressing concerns related to data privacy and access control.

- Batch Processing and Beyond: While Hadoop initially focused on batch processing, advancements in the ecosystem have enabled real-time and interactive data processing.

- Geospatial Data Handling: Hadoop has capabilities for handling geospatial data, supporting applications related to mapping, location-based services, and more.

- Cost-Effective Storage: Hadoop’s distributed storage system provides a cost-effective solution for storing massive amounts of data compared to traditional storage methods.

- Community Support and Resources: The Hadoop community offers extensive resources, forums, and documentation, aiding developers and organizations in leveraging the platform effectively.

- Cluster Management Tools: Various cluster management tools have emerged to simplify the deployment, monitoring, and management of Hadoop clusters.

- Continuous Evolution: Hadoop continues to evolve with advancements in technology, adapting to changing data processing needs and integrating with modern solutions.

Hadoop was a pioneering framework that changed how vast amounts of data are handled, stored, and processed. It offered a scalable, fault-tolerant platform that made big data analytics widely accessible, and its ecosystem has since grown to include many tools and frameworks for diverse data processing needs. Although the landscape of data technologies keeps changing, Hadoop’s impact remains significant. Its open-source nature, adaptability, and contributions to data-driven decision-making continue to resonate across industries, and it laid the groundwork for modern data architectures.